Imagine this: You’ve just discovered a powerful new LLM that claims to think like a PhD researcher. Excited, you plug it into your project expecting magic.

But instead of groundbreaking insights, you get outdated answers, slow responses, random hallucinations, and a skyrocketing cloud bill.

Sound familiar?

That’s because an LLM alone is not enough.

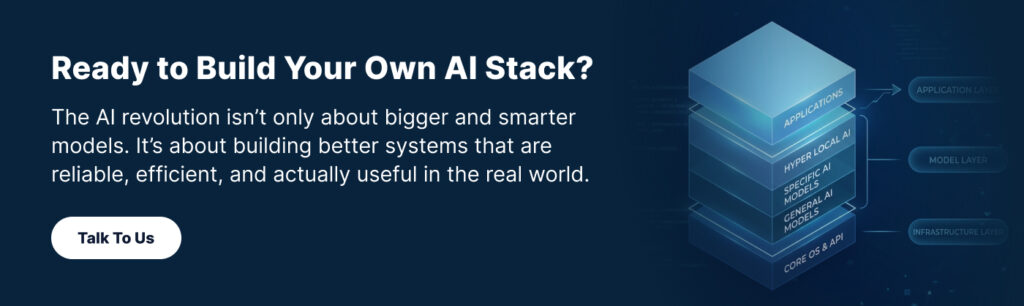

In 2026, building AI that actually solves real problems requires a full AI technology stack — a layered system where every component works together like a well-oiled machine.

Think of it as building a high-performance car. The LLM is the powerful engine, but without the right chassis (hardware), fuel system (data + RAG), smart transmission (orchestration), and driver-friendly controls (application layer), you’re not going anywhere fast, or safely.

In this guide, we’ll break down the five essential layers of the modern AI stack.

Why Understanding the AI Stack Is a Game-Changer

Whether you’re a developer prototyping on weekends, a startup founder, or part of an enterprise team, getting the full stack right determines whether your AI delivers:

- Accurate, trustworthy results

- Lightning-fast responses

- Affordable scaling

- Strong safety and compliance

Skip a layer, and you’ll quickly hit frustrating roadblocks.

The 5 Layers of the AI Stack Explained

Let’s dive into each layer — from the foundational hardware all the way up to what users actually see and touch.

1. Infrastructure Layer: The Power Under the Hood

Large language models are compute-hungry beasts. The biggest ones rarely run well on ordinary CPUs or basic laptops, though smaller models can.

This bottom layer handles the hardware:

- GPUs and specialized AI accelerators

- Deployment choices:

  - On-premises: full control and data sovereignty (but higher upfront cost)

  - Cloud: elastic scaling and pay-as-you-go flexibility

  - Local/Edge: smaller models running directly on laptops or devices for low latency or offline scenarios

Quick tip: Your infrastructure choice dramatically affects speed, cost, and even regulatory compliance. Choose wrong, and even the best model will feel sluggish or expensive.
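
To put rough numbers on the hardware question, here's a back-of-the-envelope sketch. It estimates only the memory needed to hold a model's weights (parameter count times bytes per parameter). The function names and the 20% headroom figure are assumptions made up for illustration, and real deployments also need memory for the KV cache, activations, and runtime overhead.

```python
# Rough estimate of the memory needed just to hold model weights.
# This is a sizing sketch, not a benchmark: it ignores the KV cache,
# activations, and framework overhead.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_device(num_params: float, precision: str,
                   device_memory_gb: float, headroom: float = 0.2) -> bool:
    """Check whether the weights fit, keeping a fraction of memory free.

    The 20% default headroom is an illustrative assumption, not a rule.
    """
    usable_gb = device_memory_gb * (1 - headroom)
    return weight_memory_gb(num_params, precision) <= usable_gb


for precision in ("fp16", "int8", "int4"):
    size = weight_memory_gb(7e9, precision)
    print(f"7B model at {precision}: ~{size:.1f} GB of weights")
```

By this estimate, a 7-billion-parameter model needs about 14 GB at 16-bit precision and about 3.5 GB at 4-bit, which is why quantized small models are the usual pick for laptops and edge devices.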

2. Models Layer: Choosing the Right Brain

This is the most hyped layer — the actual LLMs or smaller, specialized models (SLMs).

Key decisions include:

- Open-source or open-weight (like Llama, Mistral, or IBM’s Granite models) vs. proprietary (like GPT or Claude)

- Model size — massive models for deep reasoning vs. lightweight ones that run cheaper and faster

- Specialization — some excel at code, reasoning, tool use, or domain expertise (science, finance, legal, etc.)

With millions of models available on Hugging Face and beyond, the options can feel overwhelming. The smart move? Match the model to your specific use case and infrastructure constraints.
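
One way to make "match the model to your use case" concrete is to write your constraints down as a filter. The sketch below is only an illustration: the catalog entries, model names, and skill tags are all made up, and a real shortlist would also weigh license terms, benchmarks, and cost.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set


@dataclass(frozen=True)
class ModelOption:
    name: str                 # hypothetical name, not a real model
    params_billions: float
    skills: FrozenSet[str]
    open_weights: bool


# Made-up catalog for illustration only.
CATALOG: List[ModelOption] = [
    ModelOption("small-general", 3, frozenset({"chat"}), True),
    ModelOption("mid-coder", 14, frozenset({"chat", "code"}), True),
    ModelOption("large-reasoner", 70, frozenset({"chat", "code", "reasoning"}), False),
]


def pick_model(catalog: List[ModelOption], required_skills: Set[str],
               max_params_billions: float,
               need_open_weights: bool = False) -> Optional[ModelOption]:
    """Return the smallest model that meets every constraint, or None."""
    candidates = [
        m for m in catalog
        if required_skills <= m.skills
        and m.params_billions <= max_params_billions
        and (m.open_weights or not need_open_weights)
    ]
    return min(candidates, key=lambda m: m.params_billions, default=None)


choice = pick_model(CATALOG, {"code"}, max_params_billions=20)
print(choice.name if choice else "no model fits these constraints")
```

Picking the smallest model that qualifies is a deliberate bias here: it keeps cost and latency down, and you can always relax a constraint if nothing fits.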

3. Data Layer: Giving Your AI Fresh, Relevant Knowledge

Most LLMs have a knowledge cutoff date. They don’t magically know about last week’s research papers, your internal company docs, or breaking news.

Enter the data layer and its star player: Retrieval-Augmented Generation (RAG).

Here’s how it works in simple terms:

- Your documents get turned into embeddings and stored in a vector database

- When a user asks a question, the system quickly retrieves the most relevant chunks

- Those chunks are added to the prompt, so the model generates grounded, up-to-date answers
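
The three steps above can be sketched in a few lines of plain Python. To keep it self-contained, this version swaps in a toy bag-of-words "embedding" and an in-memory list instead of a real embedding model and vector database, so treat `embed`, `VectorStore`, and `build_prompt` as hypothetical names and the sample documents as invented.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        # Step 1: embed the chunk and store it.
        self._items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> List[str]:
        # Step 2: rank stored chunks by similarity to the query.
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, chunks: List[str]) -> str:
    # Step 3: put the retrieved chunks into the prompt sent to the model.
    context = "\n".join(f"- {c}" for c in chunks)
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")


store = VectorStore()
store.add("Compound A inhibits kinase X in early trials.")   # invented sample text
store.add("The cafeteria menu changes every Monday.")
store.add("Kinase X is a target for a class of cancer drugs.")

question = "Which compound inhibits kinase X?"
print(build_prompt(question, store.search(question, k=2)))
```

The resulting prompt contains the two kinase-related chunks and leaves out the cafeteria one. In production you would replace `embed` with a real embedding model and `VectorStore` with an actual vector database, but the flow stays the same.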

Real-world example: say you’re building an AI assistant for drug discovery researchers. The model alone can’t know about scientific papers published in the past three months, but with a solid RAG setup pulling those papers from a vector database, it can ground its answers in them.

This layer is often the secret sauce: grounding answers in retrieved sources reduces hallucinations (though it doesn’t eliminate them) and boosts accuracy.

4. Orchestration Layer: Turning One-Shot Answers into Smart Workflows

Simple chat prompts work for basic tasks. But complex, real-world problems need planning, tool use, and self-correction.

The orchestration layer acts like a smart project manager. It breaks down user requests into steps such as:

- Planning and reasoning

- Executing tools or function calls

- Reviewing outputs and running feedback loops for better results
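
Here's a minimal sketch of that plan, execute, review loop, written without any framework so the moving parts stay visible. Everything in it is a stand-in: the tools are stubs, the plan is hard-coded where a real system would ask an LLM, and names like `search_papers`, `plan`, `review`, and `run` are hypothetical.

```python
from typing import Callable, Dict, List, Tuple


# Stub tools. Real ones would call a search API, a model, a database, etc.
def search_papers(query: str) -> str:
    return f"[stub] 3 papers found for '{query}'"


def summarize(text: str) -> str:
    return f"[stub] summary of: {text}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "search_papers": search_papers,
    "summarize": summarize,
}


def plan(request: str) -> List[Tuple[str, str]]:
    """Planning: break the request into (tool, argument) steps.

    Hard-coded here; an agent would typically have a model produce this.
    """
    return [("search_papers", request), ("summarize", "{previous}")]


def review(output: str) -> bool:
    """Review: a placeholder quality check (non-empty output)."""
    return bool(output.strip())


def run(request: str, max_retries: int = 2) -> List[str]:
    results: List[str] = []
    previous = ""
    for tool_name, arg in plan(request):
        arg = arg.replace("{previous}", previous)  # feed in the prior step's output
        for _ in range(max_retries + 1):
            output = TOOLS[tool_name](arg)         # execute the tool call
            if review(output):                     # feedback loop: retry on failure
                break
        else:
            raise RuntimeError(f"step '{tool_name}' failed review")
        results.append(output)
        previous = output
    return results


for step_output in run("kinase X inhibitors"):
    print(step_output)
```

Orchestration frameworks add a lot on top of this (state management, branching, parallel agents, tracing), but the core loop they manage looks much like the one above.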

In 2026, this layer is growing fast, with frameworks such as LangChain, LangGraph, and CrewAI. It’s what transforms a basic chatbot into reliable agentic AI that can handle multi-step workflows autonomously.

5. Application Layer: Where the Magic Meets the User

At the end of the day, real people (or other systems) need to interact with your AI.

This top layer covers:

- Clean, intuitive interfaces (chat, voice, multimodal inputs)

- Helpful features like citations, editable outputs, revision history, and feedback mechanisms

- Seamless integrations with existing tools and workflows
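
Features like citations and revision history are easier to build when the application layer treats an answer as structured data rather than a bare string. The sketch below shows one possible shape; the `Answer` and `Citation` classes and their fields are assumptions for illustration, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Citation:
    source_id: str   # hypothetical identifier for a retrieved document
    snippet: str


@dataclass
class Answer:
    text: str
    citations: List[Citation] = field(default_factory=list)
    revisions: List[str] = field(default_factory=list)  # earlier versions of text
    feedback: Optional[str] = None                       # e.g. "helpful" or a comment

    def edit(self, new_text: str) -> None:
        """Let the user edit the output while keeping revision history."""
        self.revisions.append(self.text)
        self.text = new_text

    def render(self) -> str:
        """Format the answer with numbered sources for display."""
        if not self.citations:
            return self.text
        refs = "\n".join(
            f"[{i}] {c.source_id}: {c.snippet}"
            for i, c in enumerate(self.citations, start=1)
        )
        return f"{self.text}\n\nSources:\n{refs}"


answer = Answer("Draft summary.", [Citation("doc-1", "Compound A inhibits kinase X.")])
answer.edit("Final summary.")
answer.feedback = "helpful"
print(answer.render())
```

Keeping the old text in `revisions` means an edit is never destructive, and the stored `feedback` gives you a signal to review later.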

A technically brilliant stack can still flop if the user experience feels clunky.

How the Layers Work Together in Real Life

Picture the drug discovery assistant again:

- Powerful GPUs in the cloud keep everything running fast (Infrastructure)

- A specialized reasoning model handles complex analysis (Models)

- Latest papers are retrieved via vector search and RAG (Data)

- The system plans the research steps, summarizes findings, and double-checks accuracy (Orchestration)

- Researchers get a polished interface with export options and citations (Application)

When all five layers click, you get AI that doesn’t just “generate text” — it delivers real value.

Conclusion: The AI Stack Is the Real Differentiator

The AI revolution isn’t just about chasing bigger and smarter models. It’s about building better systems — systems that are reliable, efficient, cost-effective, and truly useful in the real world.

Whether you’re analyzing the latest research papers, automating business processes, or creating innovative applications, a solid AI stack is what separates experimental prototypes from production-grade solutions.

The choices you make across infrastructure, models, data, orchestration, and application directly shape your project’s quality, speed, cost, and safety.

Start simple, experiment thoughtfully, and build with the full stack in mind — that’s how you stay ahead in 2026 and beyond.