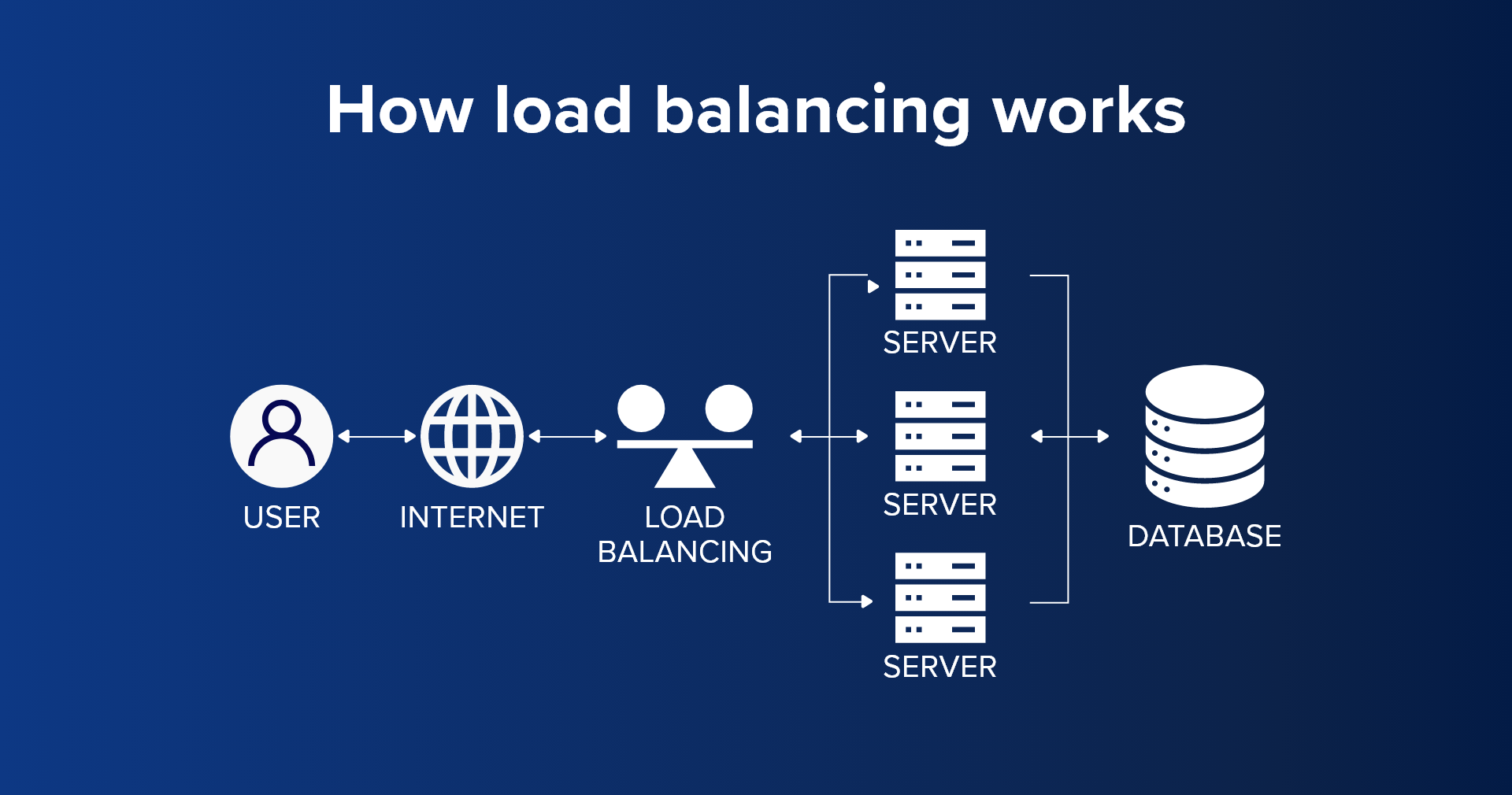

When cloud-based websites and applications start to receive heavy traffic, on the order of millions of user requests every day, cloud load balancing becomes an essential capability for keeping a cloud application stable, efficient, and reliable. Without load balancing, a single server or instance can become overwhelmed and fail, causing substantial latency and possible outages for users.

What is Cloud Load Balancing?

Cloud load balancing is the process of distributing traffic, workloads, and client requests across multiple servers, networks, or other resources in a cloud environment. It improves resource utilization by ensuring that each resource handles a reasonable share of the load, so that no machine or server in the cloud environment is overloaded or left underutilized.

Benefits of Load Balancing

Load balancing manages and directs the flow of traffic between clients and servers. It prevents client requests from queuing up on a single resource by automatically redirecting them to available resources. This improves the availability, scalability, and performance of an application. Additionally, many load balancers include built-in security features that help mitigate denial-of-service attacks.

Traditional load balancing solutions were hardware-based, relying on dedicated appliances housed in data centers. These solutions carry the cost of maintaining an experienced team of IT professionals to look after the load balancers and other infrastructure, and scaling adds further cost because every additional load balancer is another piece of hardware to buy and maintain. Cloud load balancing uses software-based load balancers, bringing with it the scalability and flexibility that the cloud itself offers.

Availability

Load balancers periodically run health checks against application servers to detect issues that could cause downtime, and they stop sending traffic to servers that fail those checks. This also makes it possible to take servers out of rotation for maintenance and upgrades without downtime. When a server fails, the load balancer redirects its traffic to the remaining healthy servers so the application stays available.
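The health-check behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not any product's implementation: the class name `HealthCheckedPool`, the `check` callback, and the rule that a server leaves the pool only after two consecutive failed checks (`unhealthy_threshold`) are all assumptions made for the example.

```python
from typing import Callable, Iterable


class HealthCheckedPool:
    """Hypothetical server pool that only routes to servers passing health checks."""

    def __init__(self, servers: Iterable[str], check: Callable[[str], bool],
                 unhealthy_threshold: int = 2) -> None:
        self.servers = list(servers)
        self.check = check                    # assumed probe, e.g. an HTTP GET on /health
        self.threshold = unhealthy_threshold  # consecutive failures before removal
        self.failures = {s: 0 for s in self.servers}
        self._counter = 0

    def run_health_checks(self) -> None:
        """Probe every server once; reset or increment its failure count."""
        for server in self.servers:
            if self.check(server):
                self.failures[server] = 0
            else:
                self.failures[server] += 1

    def healthy(self) -> list[str]:
        return [s for s in self.servers if self.failures[s] < self.threshold]

    def route(self) -> str:
        """Pick the next healthy server in turn; unhealthy servers get no traffic."""
        candidates = self.healthy()
        if not candidates:
            raise RuntimeError("no healthy servers available")
        server = candidates[self._counter % len(candidates)]
        self._counter += 1
        return server


# Usage: simulate app-2 going down.
status = {"app-1": True, "app-2": False, "app-3": True}
pool = HealthCheckedPool(status, check=lambda s: status[s])
pool.run_health_checks()
pool.run_health_checks()                 # second consecutive failure removes app-2
print(pool.healthy())                    # ['app-1', 'app-3']
print([pool.route() for _ in range(3)])  # ['app-1', 'app-3', 'app-1']
```

Requiring more than one failed check keeps a single dropped probe from pulling a server out of rotation; as soon as the server passes a check again, its failure count resets and it rejoins the pool.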

Scalability

A load balancer enables applications to handle thousands of requests by distributing network traffic across multiple servers. As application traffic changes, servers can be added to or removed from the pool (often automatically, through autoscaling), and the load balancer adjusts its distribution accordingly, keeping scaling seamless.

Performance

Load balancers reduce response times and network latency to improve performance. Distributing load across several servers keeps any single server from becoming a bottleneck, and load balancers can also route traffic to geographically close servers to reduce latency further.

Reliability

The cloud makes it possible to host an application in multiple regions around the globe. This allows load balancers to redirect traffic away from a region facing an outage to servers in other regions.

Security

Cloud-based load balancers come with built-in security features. They are designed to help withstand attacks such as denial-of-service (DoS) attacks, which flood a server with huge numbers of concurrent requests to make it fail. During a DoS attack, load balancers spread the attack traffic across multiple servers, minimizing its impact. Additionally, many continuously monitor traffic and route it through network firewalls to keep malicious content from reaching the servers.

Types of Cloud Load Balancing Algorithms

Static Algorithm

Static load balancing algorithms distribute traffic according to rules fixed at the start, without considering the current state of each server. Because the distribution is set up before load balancing begins, in-depth knowledge of the server resources is needed from the outset. Such algorithms work well for systems with very little variation in load. Traffic is split between the servers in a predetermined way: either equally, or in fixed proportions as in weighted round robin.

Round Robin

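In the round robin method, incoming client requests are sent to the servers one after the other in a fixed, repeating order: the first request goes to the first server, the second to the second server, and so on, returning to the first server after the last one.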
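A minimal Python sketch of round robin selection follows; the class and server names are hypothetical. It relies on `itertools.cycle` to repeat the server list indefinitely.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out servers in a fixed, repeating order."""

    def __init__(self, servers: list[str]) -> None:
        if not servers:
            raise ValueError("at least one server is required")
        self._order = cycle(servers)  # endless iterator: s1, s2, ..., sN, s1, ...

    def next_server(self) -> str:
        return next(self._order)


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([balancer.next_server() for _ in range(5)])
# ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```

The balancer keeps no information about server load at all, which is what makes round robin a static algorithm.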

Weighted Round Robin

The weighted round robin method assigns a weight to each server based on its capacity, with higher-capacity servers receiving higher weights. Requests are then assigned in proportion to these weights, so the workload is distributed according to capacity.
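The article does not name a specific weighted round robin variant, so the sketch below assumes the "smooth" variant used by NGINX. Every round, each server's running total grows by its weight; the server with the highest total is chosen and then has the sum of all weights subtracted. This interleaves picks instead of sending a burst of consecutive requests to the heaviest server. The class and server names are hypothetical.

```python
class SmoothWeightedRoundRobin:
    """Smooth weighted round robin: picks are proportional to the weights."""

    def __init__(self, weights: dict[str, int]) -> None:
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be positive")
        self.weights = dict(weights)
        self.total = sum(self.weights.values())
        self.current = {server: 0 for server in self.weights}

    def next_server(self) -> str:
        # Every server gains its weight; the highest running total wins.
        for server, weight in self.weights.items():
            self.current[server] += weight
        chosen = max(self.current, key=self.current.get)  # ties go to the first server
        self.current[chosen] -= self.total
        return chosen


# One large server with weight 5 and two small servers with weight 1.
wrr = SmoothWeightedRoundRobin({"large": 5, "small-1": 1, "small-2": 1})
print([wrr.next_server() for _ in range(7)])
# ['large', 'large', 'small-1', 'large', 'small-2', 'large', 'large']
```

Over every cycle of seven requests, the large server receives five and each small server one, matching the 5:1:1 weights.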

IP Hash

A hash key is generated by applying a hash function to the client's IP address, and each key maps to a specific server. Requests are forwarded to the matched server, so a given client consistently reaches the same server as long as the server pool does not change.
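A minimal sketch of IP hash selection, assuming SHA-256 as the hash function and a simple modulo mapping from key to server (the function name `pick_server` is hypothetical):

```python
import hashlib
import ipaddress


def pick_server(client_ip: str, servers: list[str]) -> str:
    """Map a client IP to a server by hashing the IP address."""
    if not servers:
        raise ValueError("at least one server is required")
    packed = ipaddress.ip_address(client_ip).packed  # validates IPv4/IPv6, returns raw bytes
    digest = hashlib.sha256(packed).digest()         # stable across processes, unlike hash()
    key = int.from_bytes(digest[:8], "big")          # hash key as an integer
    return servers[key % len(servers)]               # modulo maps the key to a server


servers = ["app-1", "app-2", "app-3"]
print(pick_server("203.0.113.7", servers))  # same server every time for this IP
```

Modulo mapping is the simplest choice, but adding or removing a server remaps most clients to different servers; consistent hashing is a common refinement that limits that reshuffling.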

Dynamic Algorithm

A dynamic load balancing algorithm checks the current state of the system to find the least-loaded server and prioritizes it for new work. This lets load shift from a heavily occupied machine to an underutilized one in real time. To do this, dynamic algorithms rely on continuous, real-time information about the servers and the network.

Least Connections

The least connections method tracks the number of open connections on each server and sends new traffic to the server with the fewest. It assumes that all connections require roughly the same amount of server computing power.
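A minimal sketch of least connections; the class and method names are hypothetical. The balancer has to be told when each connection closes (`release`), and that ongoing state tracking is what makes this a dynamic algorithm.

```python
class LeastConnectionsBalancer:
    """Sends each new connection to the server with the fewest open connections."""

    def __init__(self, servers: list[str]) -> None:
        self.active = {server: 0 for server in servers}

    def acquire(self) -> str:
        """Choose a server for a new connection and count it as open."""
        server = min(self.active, key=self.active.get)  # ties go to the first server
        self.active[server] += 1
        return server

    def release(self, server: str) -> None:
        """Call when a connection to `server` closes."""
        if self.active[server] == 0:
            raise ValueError(f"{server} has no open connections")
        self.active[server] -= 1


lb = LeastConnectionsBalancer(["app-1", "app-2", "app-3"])
first, second, third = lb.acquire(), lb.acquire(), lb.acquire()  # one connection each
lb.release(second)   # app-2's connection closes
print(lb.acquire())  # app-2, now the least busy
```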

Least Response Time

Response time is the time elapsed between a request being sent and the response being returned to the client. The least response time method combines each server's response time with its number of active connections to decide which server should process a request.
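The article does not give a formula for combining the two signals, so the sketch below assumes a simple one: score each server by its average response time multiplied by its active connections plus one, then pick the lowest score. Response times are smoothed with an exponentially weighted moving average. The names `ServerStats`, `record_response`, and `pick_least_response_time`, and the `alpha` value, are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ServerStats:
    name: str
    avg_response_ms: float  # smoothed response time observed for this server
    active_connections: int


def record_response(stats: ServerStats, elapsed_ms: float, alpha: float = 0.2) -> None:
    """Fold a new measurement into the average (exponentially weighted moving average)."""
    stats.avg_response_ms = alpha * elapsed_ms + (1 - alpha) * stats.avg_response_ms


def pick_least_response_time(servers: list[ServerStats]) -> str:
    """Choose the server with the lowest estimated wait.

    Assumed scoring rule: average response time multiplied by the number of
    connections the server would hold once the new request is added.
    """
    best = min(servers, key=lambda s: s.avg_response_ms * (s.active_connections + 1))
    return best.name


fleet = [
    ServerStats("app-1", avg_response_ms=40.0, active_connections=3),   # score 160
    ServerStats("app-2", avg_response_ms=100.0, active_connections=0),  # score 100
    ServerStats("app-3", avg_response_ms=25.0, active_connections=5),   # score 150
]
print(pick_least_response_time(fleet))  # app-2
```

The slowest server wins here because it is idle, which is the point of combining both signals rather than looking at response time alone.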

Cloud Load Balancing Functionalities

Load balancers play a crucial role in optimizing network traffic and enhancing application performance. They are essential for distributing workloads efficiently across servers to meet user demand.

Network Load Balancers

Network load balancers focus on optimizing traffic and minimizing latency across local and wide-area networks. By leveraging network information such as IP addresses, destination ports, and protocols like TCP and UDP, they intelligently route network traffic to ensure ample throughput for user satisfaction.

Application Load Balancers

Application load balancers route request traffic, such as HTTP and API requests, based on application content, including URLs, SSL sessions, and HTTP headers. Examining application-level content lets these load balancers distinguish between requests that need different functions and direct each one to the servers that can fulfill it quickly and reliably.
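Content-based routing can be sketched as a lookup on the request path. The rules, target-group names, and the longest-prefix-wins policy below are illustrative assumptions, not any particular product's rule syntax; real application load balancers may also match on HTTP headers and other request attributes.

```python
# Hypothetical routing rules: URL path prefix -> group of servers (target group).
ROUTING_RULES = {
    "/api/": "api-servers",
    "/api/v2/": "api-v2-servers",
    "/images/": "static-content-servers",
}
DEFAULT_TARGET = "web-servers"


def route_by_path(path: str, rules: dict[str, str] = ROUTING_RULES,
                  default: str = DEFAULT_TARGET) -> str:
    """Return the target group whose path prefix matches; the longest prefix wins."""
    matches = [prefix for prefix in rules if path.startswith(prefix)]
    if not matches:
        return default
    return rules[max(matches, key=len)]


print(route_by_path("/api/v2/orders"))  # api-v2-servers
print(route_by_path("/api/users"))      # api-servers
print(route_by_path("/about"))          # web-servers
```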

Virtual Load Balancers

In the era of virtualization and technologies like VMware, virtual load balancers have gained prominence. They optimize traffic across servers, virtual machines, and containers. Open-source container orchestration tools like Kubernetes provide built-in virtual load balancing, distributing requests among the containers (pods) running across the nodes of a cluster.

Global Server Load Balancers

Global server load balancers excel in routing traffic to servers across multiple geographic locations, ensuring uninterrupted application availability. They intelligently assign user requests to the closest available server, and in the event of a server failure, redirect traffic to another location with an available server. This failover capability makes global server load balancing a valuable component of disaster recovery strategies.
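The nearest-available-server logic with failover can be sketched as follows. Region names and coordinates are hypothetical, and "closest" is approximated by great-circle distance; production global load balancers often rely on DNS and measured latency instead.

```python
import math

# Hypothetical regions with approximate (latitude, longitude) of their data centers.
REGIONS = {
    "us-east": (39.0, -77.5),
    "eu-west": (53.3, -6.3),
    "ap-southeast": (1.35, 103.8),
}


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points in kilometers."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def pick_region(client: tuple[float, float], healthy: set[str]) -> str:
    """Send the client to the nearest region that is currently healthy."""
    candidates = [region for region in REGIONS if region in healthy]
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=lambda r: haversine_km(client, REGIONS[r]))


paris = (48.86, 2.35)
print(pick_region(paris, {"us-east", "eu-west", "ap-southeast"}))  # eu-west
print(pick_region(paris, {"us-east", "ap-southeast"}))             # us-east (failover)
```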

What Is Cloud Load Balancing as a Service (LBaaS)?

For businesses managing ever-growing online traffic, traditional load balancing appliances often fall short: hardware costs escalate, configuration is time-consuming, and maintenance demands constant monitoring. Load Balancing-as-a-Service (LBaaS) is a cloud-based alternative that is transforming the way server workloads are distributed.

LBaaS routes incoming traffic to the best-suited server in a cloud environment. Unlike an on-premises data center with fixed capacity, it offers far greater scalability and flexibility. LBaaS scales up and down on demand, maintaining performance without expensive, pre-provisioned infrastructure.

Because traffic is automatically rerouted around any server hiccups, high availability becomes far easier to achieve, helping ensure service continuity even for mission-critical applications. Reduced costs are another key perk: you ditch the hefty upfront investment in dedicated appliances and the ongoing maintenance headaches that come with them. Pay-as-you-go pricing models let you scale your spending alongside your traffic demands, maximizing efficiency and budget control.

What Does AWS Offer for Cloud Load Balancing?

AWS offers Elastic Load Balancing (ELB), which automatically distributes incoming traffic across registered targets such as Amazon EC2 instances, containers, and IP addresses, including third-party virtual appliances and servers in on-premises data centers. It is a fully managed service, so there is no load balancer infrastructure to provision or maintain yourself. ELB supports four types of load balancers:

- Application Load Balancer routes HTTP and HTTPS traffic at the application layer (layer 7).

- Network Load Balancer routes TCP, UDP, and TLS traffic at the transport layer (layer 4), based on IP addresses and ports.

- Gateway Load Balancer routes traffic to third-party virtual appliances.

- Classic Load Balancer is the previous-generation load balancer, originally designed for applications in the Amazon EC2-Classic network, which AWS has since retired.

Other Solutions for Handling Cloud Traffic

- Autoscaling: Automatically scales compute resources based on demand.

- Content delivery networks (CDNs): Cache static content closer to users for faster delivery.

- API gateways: Manage and secure API access for microservices architectures.
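To illustrate the autoscaling idea, the sketch below computes a desired instance count from average CPU utilization using a proportional rule, similar in form to the formula documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current × current metric / target metric)). The function name, the CPU metric, and the limits are assumptions; real autoscalers typically add cooldowns or stabilization windows that are not shown here.

```python
import math


def desired_capacity(current_instances: int, current_cpu: float, target_cpu: float,
                     min_instances: int = 1, max_instances: int = 10) -> int:
    """Scale the instance count so average CPU moves toward the target, within limits."""
    if current_instances < 1 or target_cpu <= 0:
        raise ValueError("need at least one instance and a positive target")
    # Proportional rule: if CPU is 1.5x the target, run 1.5x as many instances.
    needed = math.ceil(current_instances * current_cpu / target_cpu)
    return max(min_instances, min(max_instances, needed))


print(desired_capacity(4, current_cpu=90.0, target_cpu=60.0))                   # 6: scale out
print(desired_capacity(4, current_cpu=10.0, target_cpu=60.0, min_instances=2))  # 2: scale in to the floor
```

A load balancer complements this: as instances are added or removed, it spreads traffic across whatever capacity currently exists.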

Conclusion

Cloud load balancing is an effective, cost-efficient way to distribute millions of incoming requests. The cloud makes it possible to scale an application as far as required and to distribute requests across servers hosted around the globe.

A cloud load balancer serves as a linchpin for organizations seeking to achieve optimal performance, scalability, and resilience for their applications in the cloud. By intelligently distributing incoming traffic, adapting to changing workloads, and providing fault tolerance mechanisms, cloud load balancers contribute significantly to the seamless operation of applications in the dynamic landscape of cloud computing.